(Founder of Blur Busters here)

Short Question:

How do I make TargetElapsedTime as microsecond-accurate as possible?

…That is, having the next Update()/Draw() cycle called exactly on time: not too early, not too late, with better-than-millisecond granularity.

Long Form Explanation:

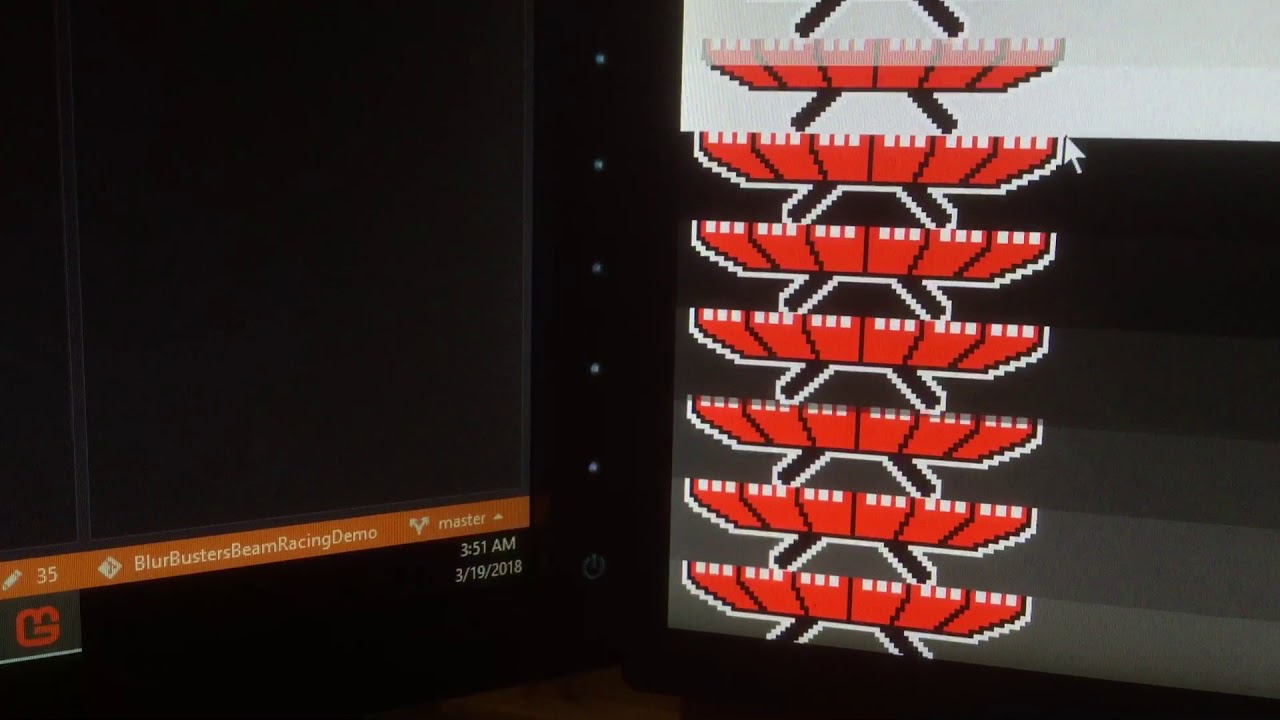

I have a special beam-racing application that I created in MonoGame (see the YouTube video of a mouse arrow dragging the exact position of a VSYNC OFF tearline), and I’m trying to create the world’s first cross-platform beam racing demo for Blur Busters using the MonoGame engine.

On my PC, game.TargetElapsedTime is accurate nearly to the microsecond. Once I set it, the next Update/Draw cycles are called right on the dot, almost to the exact microsecond. Fantastic. Beam racing success.

…Look at that! Mouse dragging the exact position of a VSYNC OFF tearline! Old skool beam racing… (using 100% pure MonoGame APIs to simulate raster interrupts, with no raster register – just precision clock math as an offset from VSYNC timestamps).

MonoGame retweeted this recently, see https://twitter.com/BlurBusters/status/975646569528811520
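To illustrate the "precision clock math as an offset from VSYNC timestamps" mentioned above, here is a minimal sketch. It assumes the display scans out at a constant rate between VSYNCs, and that the total scanline count (visible lines plus vertical blanking) is known. `RasterClock`, `ScanlineOffsetMicroseconds` and their parameters are hypothetical names for illustration, not MonoGame APIs.

```csharp
using System;

// Hypothetical helper for illustration; none of these names come from MonoGame.
static class RasterClock
{
    // Estimates how many microseconds after the most recent VSYNC the display
    // begins scanning out the given scanline.
    // Assumption: scan-out is linear in time, and totalScanlines includes the
    // vertical blanking lines as well as the visible ones.
    public static double ScanlineOffsetMicroseconds(
        int scanline, int totalScanlines, double refreshRateHz)
    {
        double refreshPeriodUs = 1000000.0 / refreshRateHz;
        return refreshPeriodUs * scanline / totalScanlines;
    }
}
```

For example, with an assumed 60 Hz refresh and 1125 total scanlines, `RasterClock.ScanlineOffsetMicroseconds(540, 1125, 60.0)` works out to about 8000 microseconds after VSYNC. Adding that offset to a VSYNC timestamp gives the moment to present a frame so that the tearline lands near scanline 540.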

However, on my laptop, game.TargetElapsedTime is only millisecond accurate. If I resort to huge hacks and call Present() / Flush() directly, timed by polling QueryPerformanceCounter(), it works, but that’s not very MonoGamey. I’d rather MonoGame offer an optional ultra-precise, microsecond-accurate frame pacing mode (even at the cost of more battery consumption), where TargetElapsedTime schedules the next Update/Draw to within approximately 10 microseconds, as I can already do with the QueryPerformanceCounter() polling hacks.
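The kind of high-resolution polling described above can be sketched roughly as follows. This is an illustration under stated assumptions, not MonoGame's internal timing code: it uses .NET's `Stopwatch` (which, as far as I know, is backed by QueryPerformanceCounter() on Windows when `Stopwatch.IsHighResolution` is true), sleeps while the deadline is far away, then busy-polls for the final stretch. `PreciseWait`, `WaitUntil` and the 2 ms margin are hypothetical choices.

```csharp
using System;
using System.Diagnostics;
using System.Threading;

// Hypothetical helper for illustration; not part of MonoGame.
static class PreciseWait
{
    // Converts a duration in microseconds to Stopwatch ticks.
    public static long MicrosecondsToTicks(double microseconds)
    {
        return (long)(microseconds * Stopwatch.Frequency / 1000000.0);
    }

    // Blocks until Stopwatch.GetTimestamp() reaches targetTimestamp.
    // Returns the overshoot in Stopwatch ticks (0 or more).
    public static long WaitUntil(long targetTimestamp)
    {
        // Assumed safety margin of 2 ms. It needs to exceed the worst-case
        // overshoot of Thread.Sleep(1) on the target system, which can be
        // much larger when the OS timer resolution is coarse.
        long sleepMargin = MicrosecondsToTicks(2000);

        // Coarse phase: yield the CPU while the deadline is still far away.
        while (targetTimestamp - Stopwatch.GetTimestamp() > sleepMargin)
        {
            Thread.Sleep(1);
        }

        // Fine phase: busy-poll the high-resolution counter until the deadline.
        long now;
        while ((now = Stopwatch.GetTimestamp()) < targetTimestamp)
        {
            Thread.SpinWait(1);
        }
        return now - targetTimestamp;
    }
}
```

A caller would compute `Stopwatch.GetTimestamp() + PreciseWait.MicrosecondsToTicks(delayUs)` and pass it to `WaitUntil` before presenting. The busy-poll phase burns a CPU core for up to the margin on every frame, which is the battery cost mentioned above.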

How can I make MonoGame trigger the next Update() call to better-than-millisecond granularity? I need to do this for beam-racing on more platforms (laptops, Linux, Mac with beamsync OFF / VSYNC OFF, etc).

This is part of current research work towards the lagless VSYNC ON modes recently added to some emulators (synchronizing the emulator raster to the real-world raster, now successfully implemented in WinUAE and GroovyMAME).

Help?

Thanks so much,

Mark Rejhon

Founder, Blur Busters