I assume the tests just use the “default” graphics adapter, which I think is determined by the OS. I just set the default to my NVIDIA GeForce GTX 960M and reran the tests. @Tato, are you disabling IsFixedTimeStep and VSync? I’m getting ~62 fps for all tests now, which seems too consistent.

That would affect the results below 60 as well. If it takes 18ms to draw a frame, it will simply fall to 30fps.
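To put numbers on that, here is a rough sketch of the arithmetic, assuming strict double-buffered VSync on a 60 Hz display (the `vsynced_fps` helper is made up for illustration, it is not part of MonoGame or the test project). A frame that misses a refresh is held until the next one, so the frame time gets rounded up to a whole number of refresh intervals:

```python
import math

def vsynced_fps(render_ms, refresh_hz=60.0):
    """Effective frame rate when every frame waits for the next vertical blank.

    Assumes strict double-buffered VSync: a frame that misses a refresh
    is held until the following one.
    """
    interval_ms = 1000.0 / refresh_hz
    # The frame occupies a whole number of refresh intervals, never a fraction.
    intervals = max(1, math.ceil(render_ms / interval_ms))
    return refresh_hz / intervals

for ms in (10.0, 16.0, 18.0, 34.0):
    print(f"{ms:4.1f} ms per frame -> {vsynced_fps(ms):.0f} fps")
```

So 18 ms of work per frame lands on 30 fps, not the ~55 fps you would see with VSync off. In-between readings on a counter would then come from averaging a mix of 60 fps and 30 fps frames.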

Oh right. Then on my NVIDIA GPU, performance is pretty much the same for these platforms…

Not to brag, but to be fair I got around 62fps on all of them except MonoGameGL, in which the fps was hovering around 54.

@Surgecrafter

It seems that those tests have VSync locked, so they are not a good test for comparing performance. The frame rate can never be more than 60fps, and often you will get values like 45, 30 or 15 fps, which do not represent actual performance. Also look at the sample code above: I don’t think the frame rate counter is 100% correct (62fps can’t be real).
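For reference, a minimal sketch of a frame counter that stays honest. This is not the counter from the sample code, and `FpsCounter` is a hypothetical name. The one thing it is careful about is dividing by the time that actually elapsed instead of assuming the window was exactly one second:

```python
class FpsCounter:
    """Averages the frame rate over windows of at least `window_s` seconds."""

    def __init__(self, window_s=1.0):
        self.window_s = window_s
        self.elapsed = 0.0
        self.frames = 0
        self.fps = 0.0

    def tick(self, dt_s):
        """Call once per drawn frame with the measured time since the last frame."""
        self.elapsed += dt_s
        self.frames += 1
        if self.elapsed >= self.window_s:
            # Divide by the real elapsed time, not the nominal window,
            # otherwise any overshoot of the window inflates the reading.
            self.fps = self.frames / self.elapsed
            self.elapsed = 0.0
            self.frames = 0

counter = FpsCounter()
for _ in range(16):
    counter.tick(0.0625)  # sixteen frames of 1/16 s each
print(counter.fps)
```

A counter that just reports "frames counted when the timer passed one second" will read high whenever the last frame overshoots the window, which may or may not be what is happening with the 62.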

As for the real issue of performance, MG still has plenty of room to improve on SpriteBatch. There are a couple of PRs and discussions going on at the moment.

The SpriteBatch does have a few quirks, but they greatly improved it with the MonoGame 3.5 update.

You guys should remember one thing that will give inconsistent results across SpriteBatch tests.

It has far less to do with XNA or MonoGame or which card you have, because they will all be affected.

SpriteBatch doesn’t mipmap Draw or DrawString calls automatically, and that is the number one cost of drawing sprites, whether in batches or in immediate mode. There is a pretty big penalty for downscaling, and the more each draw is downscaled, the bigger the hit. This is fundamental to how the math works when rasterizing pixels, which involves blending from source to destination, and that can get very expensive.

Try this test: load up a 2k x 2k image and draw it a few hundred times to a 128 x 128 pixel rectangle, e.g. (0, 0, 128, 128).

Then do the same with an image that is exactly the same size as the rectangle.

Observe the frame rate of each test.
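As a back-of-envelope sketch of why the two cases differ so much (assuming "2k" means 2048 pixels; `downscale_stats` is just an illustrative helper, not an XNA or MonoGame call):

```python
import math

def downscale_stats(src_px, dst_px):
    """Return (per-axis scale, source texels per destination pixel, matching mip level)."""
    scale = src_px / dst_px
    # Each destination pixel covers scale x scale texels of the source image.
    texels_per_pixel = scale * scale
    # The mip level whose size matches the destination (0 = full-size image).
    mip_level = max(0.0, math.log2(scale))
    return scale, texels_per_pixel, mip_level

print(downscale_stats(2048, 128))  # the big image squeezed into the rectangle
print(downscale_stats(128, 128))   # the image that already matches the rectangle
```

In the first case every destination pixel stands in for 256 source texels, and the size that actually fits is four mip levels down. Without mipmaps the GPU has to sample the full-size texture at widely spaced locations for every one of those draws.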

After that, think about how the screen resolution of the test differs between two computers.

You also have other things that can affect results, like antialiasing and how you do your alpha blending.

I could pump out 500 or more SpriteBatch draws in XNA at 60fps (quite a lot more, if I remember right), and I could probably do it here with MonoGame if I followed only that one rule of thumb, never ever downscale images, and ignored the other stuff.

With text, each letter is a draw, and if your text is downscaled that’s a big hit.

While MeasureString is crap in the way it works with DrawString, that comes from XNA’s design, and in my opinion DrawString in XNA wasn’t very hot.

I actually have been working on a replacement SpriteBatch DrawString class on and off for some time now.

Btw…

VSync won’t actually impact a frame rate test, because VSync is actually the monitor’s refresh timing signal. Unless you’re running on a very old TV, your monitor should be capable of at least 120 frames per second, newer ones even more, but the signal itself is ultra fast, like in the .02 millisecond range vs a frame rate in the microsecond range. I.e. it will only really have an effect when your frame rate gets close to your monitor’s maximum ability to output frames.

Sorry for the necro.

I’m using FNA and also found out that MonoGame is somewhat slower than it, but there was an improvement in 3.6 so I guess the gap will close soon. You can’t compare OpenGL vs DX but using the DX backend I found the spritebatcher in MG to be slightly slower than XNA too (on 5 rigs).

However, while these benchmarks are important if for example you’re looking for the absolute bestest performance for a 2D game that uses a massive amount of sprites (ASM anyone?), overall these benchmarks are all kinda bullshit, and that’s the subject of an article I’m writing at the moment, which I’ll publish soon.

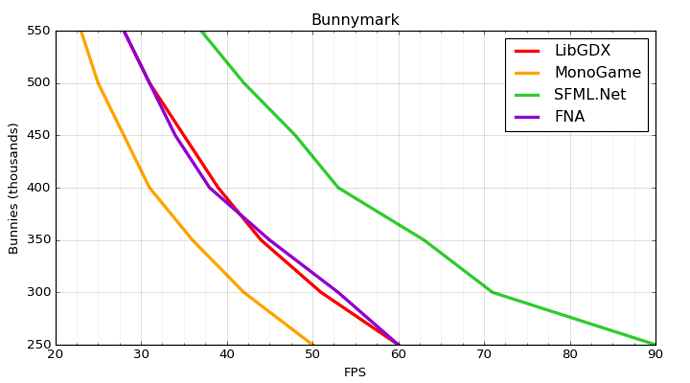

To prove my point that some benchmarks *cough* are bullshit, I made some quite decent bunnymark code (which generally isn’t the case, they generally suck) and compared naive SpriteBatch drawing in LibGDX with MG and FNA. I also made a custom high performance sprite batcher in SFML.Net that blew them all outta the water, just to show how you can get any results you want from these benchmarks. You don’t even need to make them up: just be a bit shady with your bunnymark implementations and you get any numbers you want from them. Here are the numbers:

And here is the link to download binaries (Win64): http://alangamedev.com/wp-content/uploads/2017/05/Bunnymarks.7z… I wonder why people publishing benchmark results never release the binaries so you can easily verify that for yourself. The code will be available in the article, but it’s nothing special, and the Java version is exactly the same mathematically, just using LibGDX coords. The C# versions are identical except of course for the spritebatcher implementation they use. NOTE: Disable VSync in your control panel, because in windowed mode these things suck, disabling it in code didn’t work properly, and I was too lazy to figure out why.

Another point to consider is what exactly you’re benchmarking: is it the GPU fillrate? CPU for geometry sorting and merging? Bus? There are many variables and they’re all gonna be addressed in detail in the article I’m writing, but what you can safely conclude is that except for a single use case these benchmarks are bullshit, and if you absolutely need that level of performance for that single use case you’ll want to optimize that bottleneck manually anyway.

Of course more performance is better though.

EDIT: Oh, by the way… again, it’s not valid to compare DX vs OpenGL, but that statement that XNA is faster than FNA is not accurate in >99% of cases… in any modern system with decent drivers you can expect FNA to absolutely blow XNA outta the water, no doubt about it.

I’ve set up a testbed project to benchmark and compare the performance of the latest versions of MonoGame, FNA, KNI, and XNA.