If I follow, you mean mapping the position as if it were a window directly onto the image.

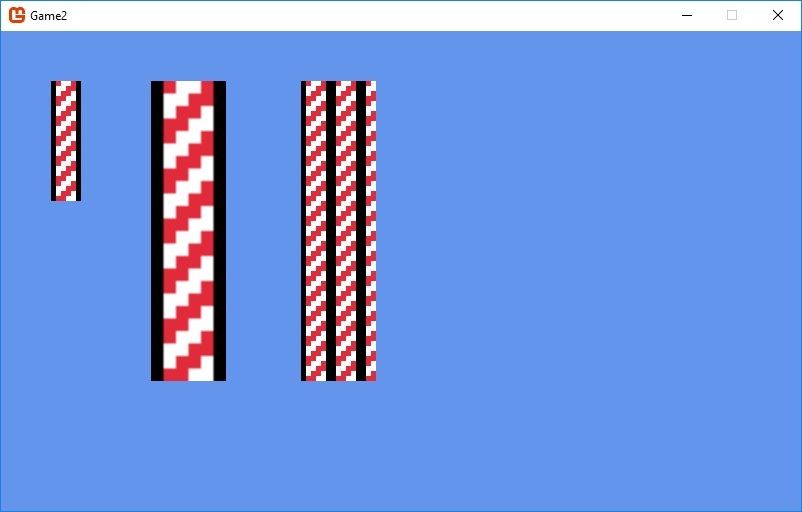

As if that image repeats virtually, as though some imaginary space were filled with these tiles end to end?

As far as I know (unless there is another way using the minification filter and wrap mode or something?), you would have to do those calculations yourself to map each rectangle.

It’s not a trivial thought process either.

The mapping itself is actually simple enough: you just base the UV coordinates on your actual draw position.
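Here is a minimal sketch of that point mapping. It uses Python as runnable pseudocode, and `texture_coord` and `normalized_uv` are hypothetical names. A draw position is wrapped into a single tile with a floor modulo, and then optionally divided by the texture size to get a 0..1 UV value.

```python
def texture_coord(pos: float, tex_size: float) -> float:
    """Map a draw position to a position on a single tile of the texture.

    Python's % is a floor modulo, so positions left of / above the origin
    still land inside [0, tex_size).
    """
    return pos % tex_size


def normalized_uv(pos: float, tex_size: float) -> float:
    """The same position expressed as a 0..1 texture coordinate."""
    return texture_coord(pos, tex_size) / tex_size
```

For example, `texture_coord(250, 100)` gives 50 and `normalized_uv(250, 100)` gives 0.5. If you port this to C#, note that `%` there keeps the sign of the dividend, so negative positions need an extra `+ size` adjustment.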

However, it gets complicated because each rectangle has to be split into as many as 4 parts (assuming it is no larger than the texture; a bigger rectangle can cross more tile edges). Each part needs its own re-mapping of draw positions and texture coordinates into the imaginary texture space, for both the start and end coordinates.
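A rough sketch of that splitting is below. It assumes a plain `(x, y, width, height)` tuple standing in for a MonoGame `Rectangle`, and `split_axis` / `split_rect` are hypothetical helpers, not library calls. Each axis is cut at tile boundaries independently. Every combination of an x piece and a y piece then gives one destination/source pair.

```python
from typing import List, Tuple

# (x, y, width, height), the same fields as an XNA/MonoGame Rectangle.
Rect = Tuple[int, int, int, int]


def split_axis(start: int, length: int, tile: int) -> List[Tuple[int, int, int]]:
    """Split [start, start + length) at tile boundaries.

    Returns (dest_start, src_start, size) for each piece, where src_start
    is the coordinate inside a single tile (0 <= src_start < tile).
    """
    pieces = []
    pos, end = start, start + length
    while pos < end:
        src = pos % tile                   # floor modulo, also correct for negative pos
        size = min(tile - src, end - pos) # stop at the tile edge or the end of the area
        pieces.append((pos, src, size))
        pos += size
    return pieces


def split_rect(dest: Rect, tex_w: int, tex_h: int) -> List[Tuple[Rect, Rect]]:
    """Return (destination, source) rectangle pairs that together tile `dest`."""
    x, y, w, h = dest
    pairs = []
    for dy, sv, hh in split_axis(y, h, tex_h):
        for dx, su, ww in split_axis(x, w, tex_w):
            pairs.append(((dx, dy, ww, hh), (su, sv, ww, hh)))
    return pairs
```

For the 250/125 example on a 100 x 100 texture, `split_rect((250, 250, 125, 125), 100, 100)` returns four pairs. The first is `((250, 250, 50, 50), (50, 50, 50, 50))`. In MonoGame, each pair would presumably go to the `SpriteBatch.Draw(texture, destinationRectangle, sourceRectangle, color)` overload.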

In short, you must keep an actual rectangle in this imaginary texture space. As you move through the destination, you have to calculate new drawing rectangles and UV areas.
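`SpriteBatch` takes source rectangles in pixels. If you build the vertices yourself, though, each piece's "UV area" is just its source rectangle divided by the texture size. A small sketch follows; `uv_area` is a hypothetical helper.

```python
from typing import Tuple


def uv_area(src_x: int, src_y: int, width: int, height: int,
            tex_w: int, tex_h: int) -> Tuple[float, float, float, float]:
    """Convert a source rectangle in texels to (u0, v0, u1, v1) in 0..1."""
    u0 = src_x / tex_w
    v0 = src_y / tex_h
    u1 = (src_x + width) / tex_w
    v1 = (src_y + height) / tex_h
    return (u0, v0, u1, v1)
```

For the first piece of the example (source 50, 50, 50, 50 on a 100 x 100 texture), this gives `(0.5, 0.5, 1.0, 1.0)`.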

Shwooo… if you're going to seriously go with this, I might have a class or two you can look at, if I can find them.

But basically it goes something like this.

Let's say your texture is 100 x 100 (width x height).

(It lives in an imaginary space filled with copies of it back to back.)

(We'll just pick an arbitrary draw area.)

You want to draw at position (250, 250) with a width and height of 125 x 125, so Right and Bottom are both 375.

(We'll just calculate x; you would do the same for y.)

Let's call the above destination rectangle drawArea.

This is just a pseudo algorithm:

```
// drawArea is the destination rectangle on screen: X = 250, Right = 375.
// texture is 100 x 100.
int dx = drawArea.X;                 // 250

int a = dx / texture.Width;          // 250 / 100 = 2 (integer division; rounds down only for positive dx)
int b = a * texture.Width;           // 2 * 100 = 200, start of this tile in the imaginary texture space
int u = dx - b;                      // 250 - 200 = 50, this is where on the texture we start drawing
int uw = texture.Width - u;          // 100 - 50 = 50, width of our source draw up to the tile edge
                                     // (if drawArea ends inside this tile, use drawArea.Right - dx instead)

// u is the x position of the source rectangle on the 100 x 100 texture.
// Since b is the start of the texture in the imaginary texture space,
// we find its end e to compare with where our destination draw ends,
// in case it goes past this single texture area.
int e = b + texture.Width;           // 200 + 100 = 300

if (drawArea.Right > e)
{
    // We're going to need another tile as well.
    // You would need some sort of loop here to track how much of drawArea
    // you have drawn, whittling that area down until you know it's all drawn.

    // We already have our first set of uv coordinates and destination coordinates.
    // To review: drawArea is where we want to draw to on screen.
    // To draw one to one we need to split it up, where d denotes a
    // SpriteBatch destination rectangle and s denotes a source rectangle.
    int x = drawArea.X;              // 250
    int xw = e - drawArea.X;         // 300 - 250 = 50 (equal to uw)

    Rectangle d0 = new Rectangle(x, ..., xw, ...);
    Rectangle s0 = new Rectangle(u, ..., uw, ...);

    // This process is the same for y, so doing this with vectors may be simpler.

    // Our original drawArea is not complete, because we are in this if statement.
    // The next piece starts at the tile boundary e, on the left edge of the texture.
    x = e;                           // 300
    xw = drawArea.Right - e;         // 375 - 300 = 75
    u = 0;
    uw = xw;                         // 75, valid only while xw <= texture.Width

    // etc.... etc...
    // Of course this is just scratched out, but this is the gist of it.
    // It isn't fully done either; the process basically just repeats from here.
    // You'll have to devise methods to break up each part to keep it simple.
    // It's possible to do this in a loop as well.
}
```
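The "just repeat it in a loop" idea from the pseudo algorithm might look roughly like this for the x axis. It is a sketch in Python that keeps the same a / b / u / e steps, and `split_x` is a hypothetical name. Each pass handles one tile and stops at either the tile's end or drawArea.Right, whichever comes first. The next pass starts where the previous one stopped.

```python
from typing import List, Tuple


def split_x(draw_x: int, draw_right: int, tex_width: int) -> List[Tuple[int, int, int]]:
    """Whittle down [draw_x, draw_right) one tile at a time.

    Returns (x, u, width) for each piece: x is the destination position,
    u the start on the texture, width the size of both rectangles.
    """
    pieces = []
    x = draw_x
    while x < draw_right:
        a = x // tex_width         # which tile we are in (floor division)
        b = a * tex_width          # start of that tile in the imaginary texture space
        u = x - b                  # where on the texture we start drawing
        e = b + tex_width          # end of that tile
        end = min(e, draw_right)   # stop at the tile edge or at drawArea.Right
        pieces.append((x, u, end - x))
        x = end                    # the next piece starts where this one stopped
    return pieces
```

With the example numbers, `split_x(250, 375, 100)` gives `[(250, 50, 50), (300, 0, 75)]`, matching d0/s0 and the second piece above. Running the same function on y and pairing the results gives the full set of rectangles.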

When it's all prototyped, it can probably be compressed down to a small bit of code.

Simple math, but logic-heavy.