Good day.

How can I draw my sprites at a lower resolution and keep their pixelated look? When I draw a sprite in my game at my desktop resolution of 1920x1080 in full-screen, it is sharp with no blur. However, drawing the sprite at game resolution 1600x900 in full-screen makes it blurry and mixes the colors. Drawing in windowed mode at any resolution keeps the expected sharpness.

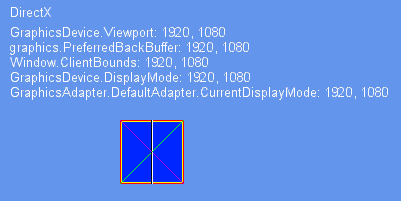

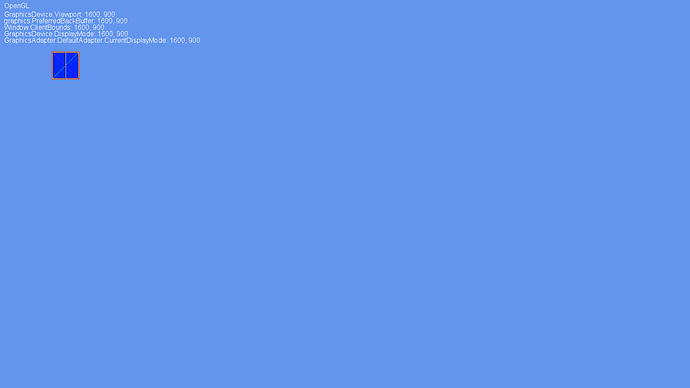

Here is the game at 1920x1080:

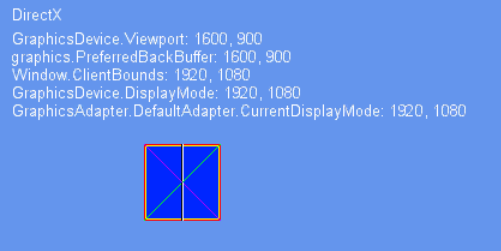

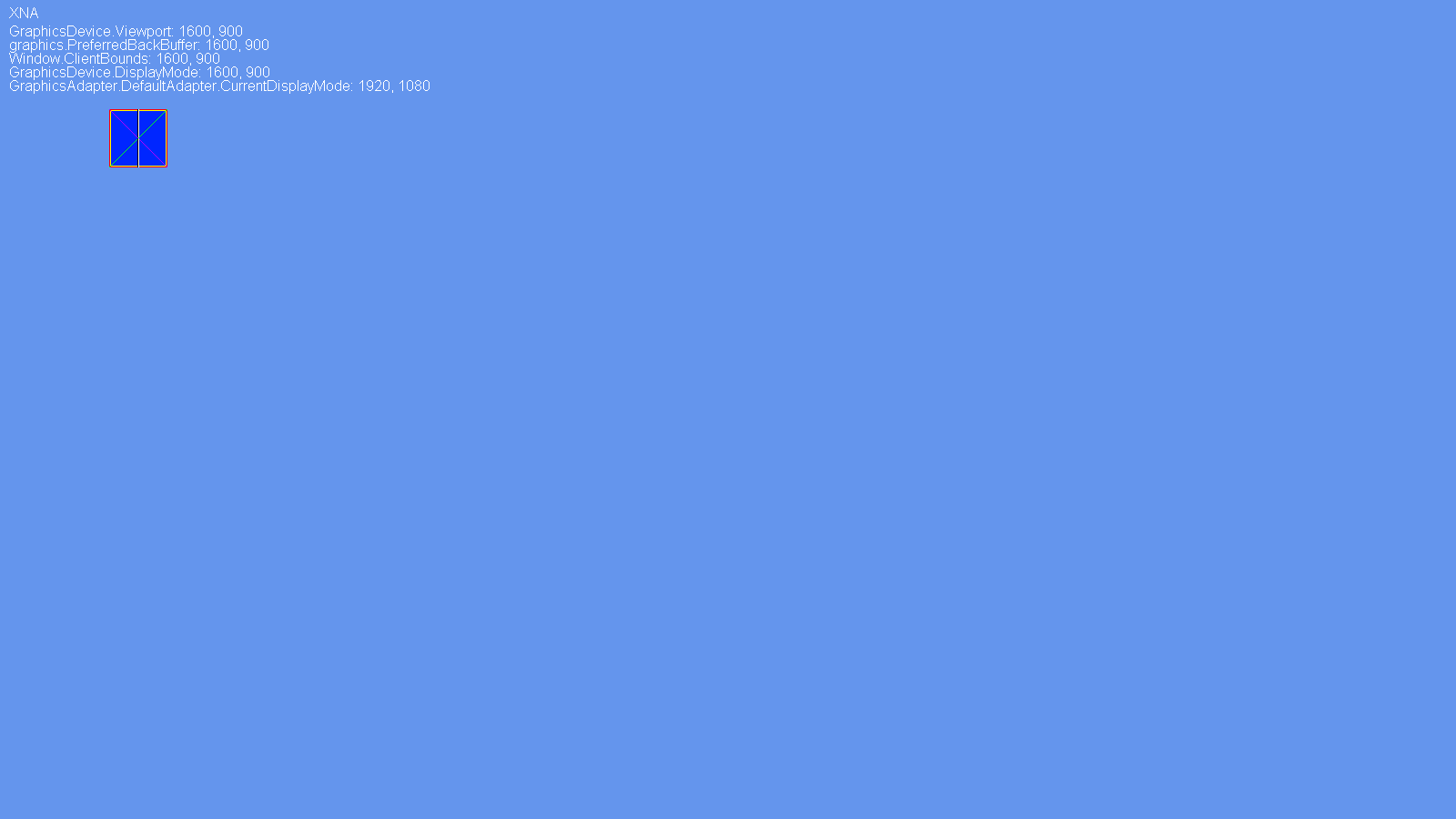

and here is the game at 1600x900:

Here is the relevant code:

-Constructor:

```csharp
public Game()
{
    graphics = new GraphicsDeviceManager(this);
    graphics.HardwareModeSwitch = false;
    graphics.GraphicsProfile = GraphicsProfile.Reach;
    graphics.PreferredBackBufferWidth = 1600;
    graphics.PreferredBackBufferHeight = 900;
    graphics.IsFullScreen = true;
    graphics.ApplyChanges();
    IsMouseVisible = true;
    Content.RootDirectory = "Content";
}
```

-Draw method:

```csharp
protected override void Draw(GameTime gameTime)
{
    GraphicsDevice.Clear(Color.CornflowerBlue);

    spriteBatch.Begin(SpriteSortMode.BackToFront,
                      BlendState.AlphaBlend,
                      SamplerState.PointClamp,
                      null, null, null,
                      Matrix.CreateScale(1, 1, 0));
    {
        spriteBatch.Draw(testTexture,
                         new Vector2(120, 120),
                         new Rectangle(0, 0, testTexture.Width, testTexture.Height),
                         Color.White);

        #region draw text
        ...
        #endregion
    }
    spriteBatch.End();

    base.Draw(gameTime);
}
```

I’m using:

- MonoGame version: 3.5.1
- MonoGame Windows Project
- Graphics: Intel HD 530
- OS: Windows 10

Also, when I worked with XNA it would keep the pixel sharpness, but I was using a different computer (Windows 7, integrated graphics).

Any help is welcome.

I can’t see how that would have been possible. You’re taking a 1600x900 buffer and stretching it to fill a 1920x1080 screen, so pixels are going to be stretched and filtered across pixel boundaries. The hardware (video card or display device) has to fill in the extra pixels, and it does this by filtering the data.

Honestly, it sounds to me like he accidentally switched to linear sampling instead of point sampling, which would explain his problem, although his example code uses point sampling. Still, it’s pretty much the only thing that could be causing this.

So, PeekFreans, please provide full-resolution screenshots so we can clearly see what is going on; resized screenshots aren’t ideal when we’re talking about pixel interpolation.

Point filtering would give sharp results after stretching, but they would also be very uneven and blocky, because some pixels are doubled and others are not.
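To make the “some pixels are doubled” point concrete: 1920/1600 = 1080/900 = 1.2, so every 5 source pixels have to cover 6 screen pixels. The sketch below assumes a point sampler that picks the source texel under each destination pixel’s centre (real scalers may round differently, which would shift which columns get doubled). `PointStretch` and its methods are hypothetical helpers for illustration, not MonoGame API.

```csharp
using System;
using System.Linq;

public static class PointStretch
{
    // Source column a point (nearest-neighbour) sampler picks for destination
    // column x, sampling at the destination pixel's centre.
    // Integer form of floor((x + 0.5) * srcWidth / dstWidth).
    public static int SourceColumn(int x, int srcWidth, int dstWidth) =>
        (int)(((2L * x + 1) * srcWidth) / (2L * dstWidth));

    // How many destination columns each source column ends up covering.
    public static int[] CoverageCounts(int srcWidth, int dstWidth)
    {
        var counts = new int[srcWidth];
        for (int x = 0; x < dstWidth; x++)
            counts[SourceColumn(x, srcWidth, dstWidth)]++;
        return counts;
    }

    public static void Main()
    {
        // Stretch a 1600-pixel-wide image across a 1920-pixel-wide screen.
        int[] counts = CoverageCounts(1600, 1920);
        int single = counts.Count(c => c == 1);
        int doubled = counts.Count(c => c == 2);
        Console.WriteLine($"single: {single}, doubled: {doubled}");

        // Screen pixels covered by each of the first ten source columns.
        Console.WriteLine(string.Join(" ", counts.Take(10)));
    }
}
```

Run as-is, it prints `single: 1280, doubled: 320` followed by `1 1 2 1 1 1 1 2 1 1`: one source column in every five is drawn two pixels wide, which is exactly the uneven, blocky look described above.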

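It looks like DirectX or OpenGL anti-aliasing is possibly kicking in. Try turning it off:

```csharp
// Game constructor: don't request a multisampled back buffer.
graphics.PreferMultiSampling = false;

// Field: a rasterizer state with multisample anti-aliasing disabled.
RasterizerState noAntiAlias = new RasterizerState { MultiSampleAntiAlias = false };

// Draw: point sampling plus the non-anti-aliased rasterizer state.
spriteBatch.Begin(SpriteSortMode.BackToFront, BlendState.AlphaBlend,
                  SamplerState.PointClamp, null, noAntiAlias);
```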

You may like the results even less. I prefer to simply make an extra font at a smaller size: any time I have to scale text down, I just switch to the smaller font. Unfortunately, there is no mip-mapping option for text.

Thank you, everyone, for your responses.

I think the issue is that the game window doesn’t change to 1600x900 while full-screen, but stays at 1920x1080 which, like KonajuGames mentioned, stretches and filters the sprite. While getting the screenshots for Ravendarke I saw that the screenshot size for my 1600x900 game was 1920x1080 pixels in size. However, my XNA screenshot for my 1600x900 game was 1600x900 pixels in size. Also, I ran a MonoGame Cross Platform project with the same code and it worked great, no color blurring. The Cross Platform screenshot is also 1600x900.

Here are the uncropped screenshots. You may need to download the image and zoom in to see the sprite differences:

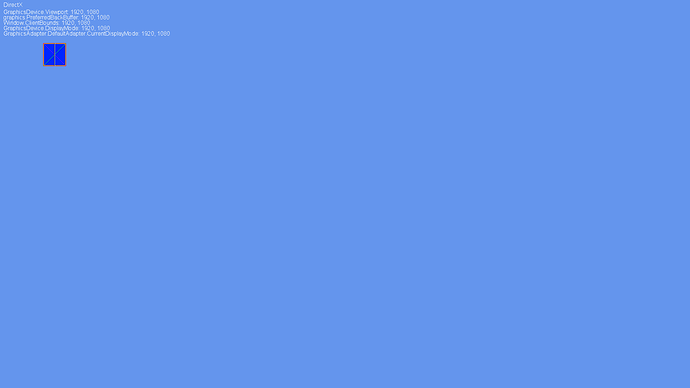

-Monogame Windows, full-screen at 1920x1080 (my desktop resolution):

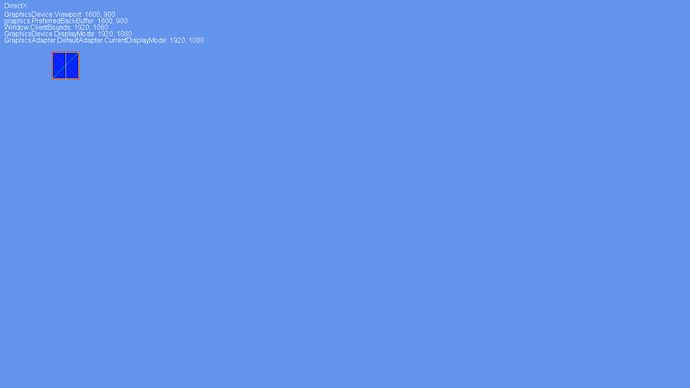

-Monogame Windows, full-screen at 1600x900 (notice the image dimensions):

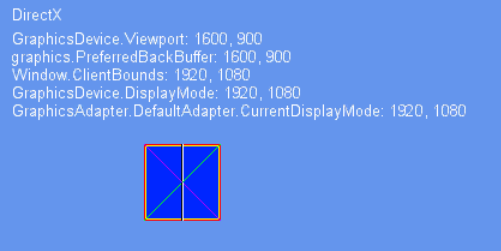

-Monogame Cross Platform, full-screen at 1600x900:

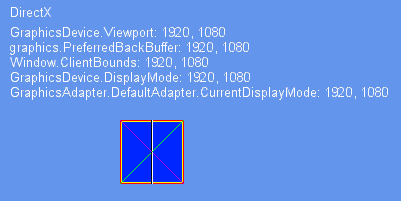

-XNA, full-screen at 1600x900 (taken from my other computer):

Is my wild guess right, that the game window not changing size is the issue?
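One way to test that guess without relying on screenshot sizes is to log the back buffer size next to the window’s client area at startup. This is a sketch assuming the `Game` class from the question (which already has the `Microsoft.Xna.Framework` and `Microsoft.Xna.Framework.Graphics` usings); `LogSizes` is a hypothetical helper name, and its output goes to the debugger’s Output window.

```csharp
// Hypothetical helper: add to the Game class and call it from LoadContent().
private void LogSizes()
{
    PresentationParameters pp = GraphicsDevice.PresentationParameters;
    Rectangle client = Window.ClientBounds;

    // Size of the buffer the game actually renders into.
    System.Diagnostics.Debug.WriteLine(
        $"Back buffer: {pp.BackBufferWidth}x{pp.BackBufferHeight}");

    // Size of the window that buffer is presented into.
    System.Diagnostics.Debug.WriteLine(
        $"Window client area: {client.Width}x{client.Height}");
}
```

If the back buffer reports 1600x900 while the client area reports 1920x1080, the 1600x900 image is being stretched to fill a desktop-sized window rather than the display switching resolution.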

Ahh, I understand the issue now. The fuzziness is in the screenshots, not on screen. This is because there are now two ways of doing full screen: hardware mode and full-screen window. Hardware mode is the old way, where the display card’s output resolution is changed. Full-screen window is the newer way, where a borderless window fills the current desktop and the output buffer (at the game’s selected resolution) is rendered to fill the window. This is much friendlier to modern desktop compositing systems. A hardware mode change is not even possible on Linux and Mac, nor in Windows Store apps and UWP.

There is a property on GraphicsDeviceManager called HardwareModeSwitch (your constructor sets it to false) that you can set to true to force a hardware mode change. This is what you are looking for.
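A minimal sketch of the constructor from the question with only that setting flipped, assuming the same `Game` class and `graphics` field. With `HardwareModeSwitch = true`, going full screen switches the display’s output resolution to 1600x900 instead of stretching a 1600x900 back buffer across a 1920x1080 borderless window.

```csharp
public Game()
{
    graphics = new GraphicsDeviceManager(this);

    // true:  full screen changes the display's actual output resolution.
    // false: borderless window at desktop resolution; the back buffer is
    //        stretched (and filtered) to fill it.
    graphics.HardwareModeSwitch = true;

    graphics.GraphicsProfile = GraphicsProfile.Reach;
    graphics.PreferredBackBufferWidth = 1600;
    graphics.PreferredBackBufferHeight = 900;
    graphics.IsFullScreen = true;
    graphics.ApplyChanges();

    IsMouseVisible = true;
    Content.RootDirectory = "Content";
}
```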