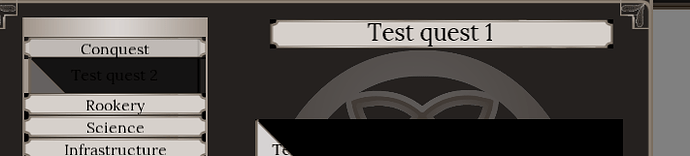

For a project I’m working on, I’ve implemented the nine-part texture technique to reduce the size of the art assets that are stored.

To recombine them, I first converted each part to a `Color[]` array, then looped through all of them and copied the pixels into the final texture.
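For reference, that CPU-side approach can be sketched roughly like this. It is a minimal sketch, not the original code: `CpuCompositor` and `CopyPart` are hypothetical names, and it assumes both textures use `SurfaceFormat.Color` and that the part fits inside the target at the given offset.

```csharp
using Microsoft.Xna.Framework;
using Microsoft.Xna.Framework.Graphics;

// Hypothetical helper, not from the original post.
static class CpuCompositor
{
    // Copies every pixel of `part` into `target`, placing the part's
    // top-left corner at (destX, destY) in the target texture.
    public static void CopyPart(Texture2D part, Texture2D target, int destX, int destY)
    {
        // Read the part's pixels back to the CPU.
        var pixels = new Color[part.Width * part.Height];
        part.GetData(pixels);

        // Write them into the matching rectangle of the target (mip level 0).
        var destination = new Rectangle(destX, destY, part.Width, part.Height);
        target.SetData(0, destination, pixels, 0, pixels.Length);
    }
}
```

Each call does a GPU-to-CPU readback and an upload, which is why this gets slow when many parts are combined.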

Of course, that is not very efficient, and it was also rather complicated, so I figured it would be easier to draw the textures on a rendertarget instead, and use that as a texture.

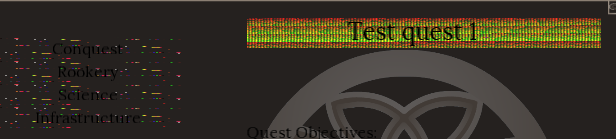

I did specify RenderTargetUsage.PreserveContents, but when I use this technique to load in assets, I get… well… what seems like random data from the GPU.

Does anyone know why this happens, how I could fix it, or what would be a better method to recombine the separate textures?

Oh, to clarify further: this happens if I use GetData and SetData to copy into a separate texture (before resetting the render target on the GraphicsDevice).

If I simply assign the render target as the texture and use it from there, the result is this:

Here the textures have been cleared completely, which is strange, since I did specify RenderTargetUsage.PreserveContents.

OK, so after experimenting a bit I found out that, for some reason, no color data is recorded when I draw to the render target.

If I am not mistaken, then this code:

`RenderTarget2D textureAsRenderTarget = new RenderTarget2D(Graphics.GraphicsDevice, TextureSize.Width, TextureSize.Height, true, SurfaceFormat.Color, DepthFormat.Depth24, 1024, RenderTargetUsage.PreserveContents);`

should create a new render target,

this code:

`SpriteBatch.GraphicsDevice.SetRenderTarget(textureAsRenderTarget);`

should assign it properly,

and then you should be able to just draw to it with the SpriteBatch. After SpriteBatch.End(), the textures that were drawn should be on the render target.
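The sequence described above can be sketched end to end as follows. This is a sketch under stated assumptions, not the poster's actual code: `RenderTargetCompositor`, `Compose`, `parts`, and `positions` are hypothetical names, the depth buffer and mipmaps are left off, and the multisample count is set to 0.

```csharp
using Microsoft.Xna.Framework;
using Microsoft.Xna.Framework.Graphics;

// Hypothetical helper, not from the original post.
static class RenderTargetCompositor
{
    // Draws each part at its position into a new render target and returns it.
    // A RenderTarget2D is a Texture2D, so the result can be drawn like any texture.
    public static RenderTarget2D Compose(
        GraphicsDevice device, SpriteBatch spriteBatch,
        Texture2D[] parts, Vector2[] positions, int width, int height)
    {
        var target = new RenderTarget2D(
            device, width, height,
            false,                      // no mipmaps
            SurfaceFormat.Color,
            DepthFormat.None,           // 2D compositing needs no depth buffer
            0,                          // no multisampling
            RenderTargetUsage.PreserveContents);

        device.SetRenderTarget(target);
        device.Clear(Color.Transparent); // start from known, fully transparent contents

        spriteBatch.Begin(SpriteSortMode.Deferred, BlendState.AlphaBlend);
        for (int i = 0; i < parts.Length; i++)
        {
            spriteBatch.Draw(parts[i], positions[i], Color.White);
        }
        spriteBatch.End();

        device.SetRenderTarget(null);    // switch back to the back buffer
        return target;
    }
}
```

The call would typically run once at load time, before the normal draw loop, so the back buffer is not disturbed mid-frame.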

Does anyone know why it is not working?

There are several things that can go wrong.

First try clearing the render target before you draw to it.

You are using a depth buffer on the render target. Do you need it?

Are you setting the bound render target to null after SpriteBatch.End?

Do the textures have an alpha channel? If they do, you may have to change the BlendState in SpriteBatch.Begin.

Post some code and we may be able to help.

Ah, no, I don’t need the depth buffer. Changing it to None doesn’t change anything, though.

Yes, nearly all textures have an alpha channel, which is why I’ve set the blend state on the GraphicsDevice to AlphaBlend, and that seems to work.

I am also resetting the render target to the back buffer after SpriteBatch.End.

I also tried clearing it (using GraphicsDevice.Clear), but that didn’t fix it.

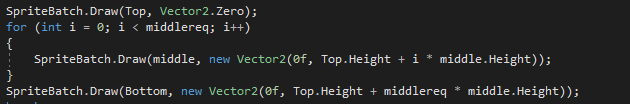

I’ll post a snip of the code, since I’m not really familiar with markdown

So what I do before drawing to the rendertarget is:

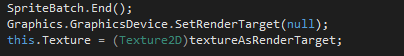

And then to draw stuff to the rendertarget I do:

And then after everything is drawn:

OK, so I might have found one of the issues.

I’d set `this.GraphicsDevice.PresentationParameters.RenderTargetUsage = RenderTargetUsage.PreserveContents;`, as well as specifying it on every render target.

If I set it only on the render targets themselves, or only on the PresentationParameters, the result is no visible render targets at all, but if I don’t set it anywhere, the result is this:

This shows a small part of the textures.

I fixed it!

So one part of the problem was that if I set RenderTargetUsage to PreserveContents both on the PresentationParameters and on the render target, it would show nothing.

The other was a stupid mistake of mine: I declared a Vector2 with one argument instead of two, which caused textures to fall outside of the render target.

Oh yes, and the black was solved by clearing with Color.Transparent, of course.
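To illustrate the Vector2 mistake: the one-argument constructor `new Vector2(value)` sets both X and Y to the same value, so a part meant for (x, 0) ends up at (x, x). A minimal sketch of computing the part positions with both components given explicitly is below; `NinePartLayout`, `Positions`, `columnWidths`, and `rowHeights` are hypothetical names, and the row-major ordering of the parts is an assumption.

```csharp
using Microsoft.Xna.Framework;

// Hypothetical helper, not from the original post.
static class NinePartLayout
{
    // Returns the top-left position of each part, in row-major order
    // (left to right, then top to bottom).
    public static Vector2[] Positions(int[] columnWidths, int[] rowHeights)
    {
        var result = new Vector2[columnWidths.Length * rowHeights.Length];
        int index = 0;
        int y = 0;
        foreach (int rowHeight in rowHeights)
        {
            int x = 0;
            foreach (int columnWidth in columnWidths)
            {
                // Two arguments: X and Y are set independently.
                result[index++] = new Vector2(x, y);
                x += columnWidth;
            }
            y += rowHeight;
        }
        return result;
    }
}
```

For a nine-part texture, `columnWidths` and `rowHeights` would each hold three entries, giving nine positions.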