I have a project with two MonoGame “clients”, one UWP and the other .NET 5, both referencing the same .NET Standard 2.0 library project.

There is very little code in the MonoGame clients, and 99% of what executes per frame is in the .NET Standard 2.0 project.

However, when I compare CPU usage between the two MonoGame clients, I’m shocked to see how much more CPU the UWP version uses than the .NET 5 client.

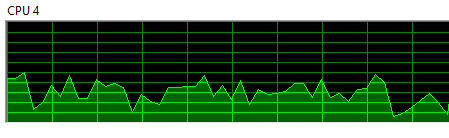

.NET 5 CPU core usage while running the game (Lowest 10%, highest 50%).

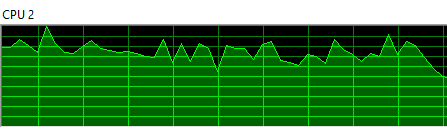

UWP CPU core usage when running the exact same game. (Lowest 65%, highest 100%)

The difference is so huge (about double the CPU usage, if not more) that I know no amount of optimizing the code itself is going to get the UWP version where it needs to be (unless there are some massive gotchas I’m not aware of).

I tried copy/pasting the library code into the UWP project (and removing the reference to the library) in the hope that the “.NET Native Toolchain” would then compile everything to native code and thus run it faster, but there is very little difference, if any.

I appreciate this is probably not an issue with MonoGame but more to do with the compiler. UWP with the .NET Native Toolchain just seems to emit code that runs very much slower and consumes more CPU cycles.

I’m using VS2019 but am considering moving over to VS2022 in the hope that it will compile the UWP code for faster execution, though I’m not hopeful.

Has anyone else encountered anything like this or have any ideas on what the problem could be?

EDIT: Some extra info:

It appears that copy/pasting the .NET Standard 2.0 code into the client app (to include it in the app’s compilation directly) made no difference because that’s kind of what the compiler does anyway during the “.NET Native Toolchain” phase:

From: .NET Native and Compilation - UWP applications | Microsoft Learn

> As a result, an application no longer depends on either third-party libraries or the full .NET Framework Class Library; instead, code in third-party and .NET Framework class libraries is now local to the app.

So although both of these client apps run the exact same source code (Game1 is shared, so only Program.cs is custom per client), the massive difference in execution speed seems to come from either the “UWP environment” (??), the .NET Native Toolchain doing a terrible job at compilation, or a bit of both.

Yet everything I’ve ever read about native compilation is about how fast it makes startup times and how slow it makes compilation times, and nothing at all about actual code execution speed. That’s why this result is such a shock to me. I would have assumed that, after waiting all that time for it to compile, execution speed would be at least similar to, if not better than, a JIT-compiled version.

Google shows this may not be the case, though. This chap is having a very similar-sounding issue (although of course the problems are so much worse for those of us trying to make fast-running games):

https://social.msdn.microsoft.com/Forums/silverlight/en-US/4914d396-dec0-45b0-aa42-4e55128b3ef7/uwp-performance-issues-with-apps-compiled-with-net-native-toolchain?forum=wpdevelop

His “solution” was to downgrade the version of Microsoft.NETCore.UniversalWindowsPlatform, but that appears to cause the app to be rejected by the Windows Store. It’s possible this is all a huge bug in certain versions of Microsoft.NETCore.UniversalWindowsPlatform (I’m currently using the latest “stable” version, 6.2.14). Perhaps there is a “good build” in some version that the store would also accept. Not good, but it is at least something for me to have a look at…