Hello,

I'm rendering pixel art at a native resolution of 480x270 (16:9 aspect ratio). We can easily make this resolution independent and scale it to any size, using black bars to preserve the aspect ratio, as explained in this classic article:

http://www.david-amador.com/2010/03/xna-2d-independent-resolution-rendering/

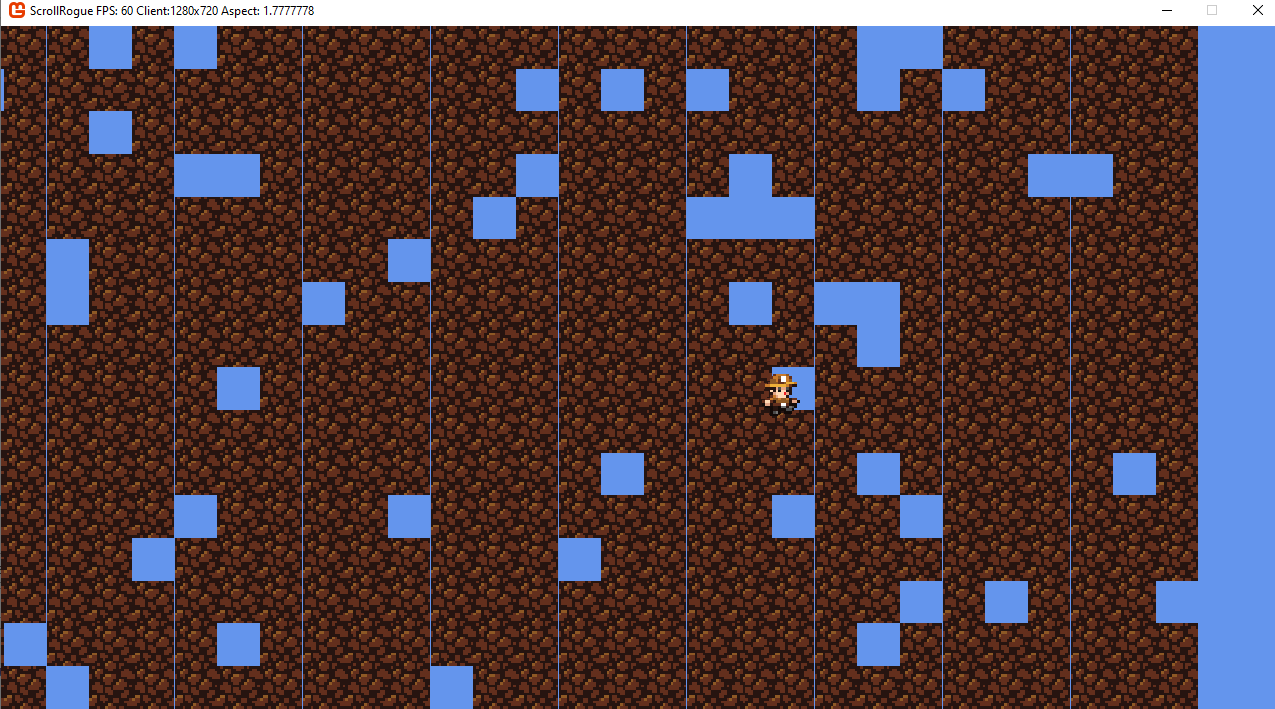

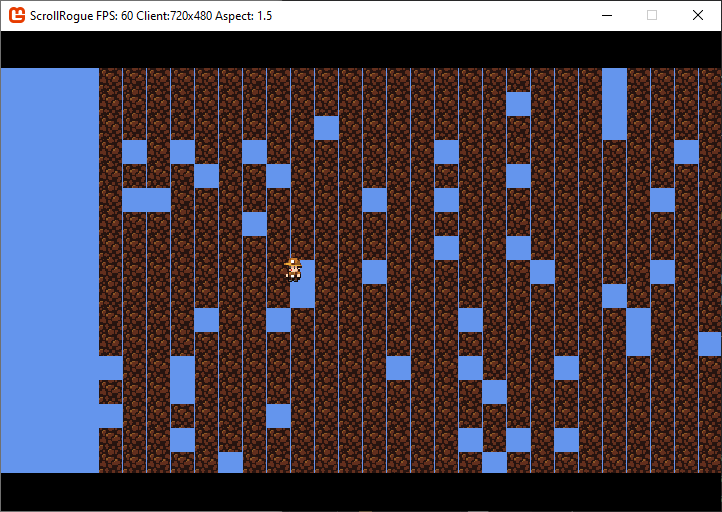
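For reference, the black-bar (letterbox) calculation can be sketched roughly as below. This is a minimal Python sketch rather than the article's XNA code; the function name and parameters are made up for illustration, and it uses integer math only.

```python
def letterbox_viewport(screen_w, screen_h, native_w=480, native_h=270):
    """Return (x, y, width, height) of the largest centered viewport that
    keeps the native aspect ratio inside the screen (hypothetical helper)."""
    # Start by filling the full screen width.
    width = screen_w
    height = (screen_w * native_h) // native_w
    if height > screen_h:
        # Too tall: fill the screen height instead (bars on left and right).
        height = screen_h
        width = (screen_h * native_w) // native_h
    # Center the viewport; the remaining area is the black bars.
    x = (screen_w - width) // 2
    y = (screen_h - height) // 2
    return x, y, width, height


if __name__ == "__main__":
    for size in [(1920, 1080), (1280, 1024), (2560, 1080)]:
        print(size, letterbox_viewport(*size))
```

The resulting scale factor is `width / native_w`, which is only an integer on some displays (4 on 1920x1080, but about 2.67 on 1280x1024).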

However, this creates problems at certain resolutions: my tile map displays with vertical lines between the tiles, and it flickers and loses pixels (pixel decimation). This usually indicates a floating-point precision or rounding problem, but I haven't been able to solve it.
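One possible way such lines could appear, shown as a simplified Python model (not how the GPU actually rasterizes): if each tile's position and size are scaled and truncated independently, a non-integer scale leaves 1-pixel gaps between some tiles. The 16-pixel tile size and the function name are assumptions for illustration.

```python
import math


def tile_gaps(scale, tile_size=16, count=8):
    """Gap in screen pixels between each pair of neighbouring tiles when
    position and width are scaled and floored separately (simplified model)."""
    # Every tile gets the same truncated on-screen width.
    width = math.floor(tile_size * scale)
    gaps = []
    for i in range(count - 1):
        right_edge = math.floor(i * tile_size * scale) + width
        next_left = math.floor((i + 1) * tile_size * scale)
        gaps.append(next_left - right_edge)
    return gaps


if __name__ == "__main__":
    print(tile_gaps(4.0))         # integer scale
    print(tile_gaps(1366 / 480))  # non-integer scale on a 1366-wide display
```

With an integer scale every gap is zero; with a scale such as 1366/480 some gaps come out as 1 pixel, which would show up as vertical lines.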

There is a potential solution but it seems rather silly:

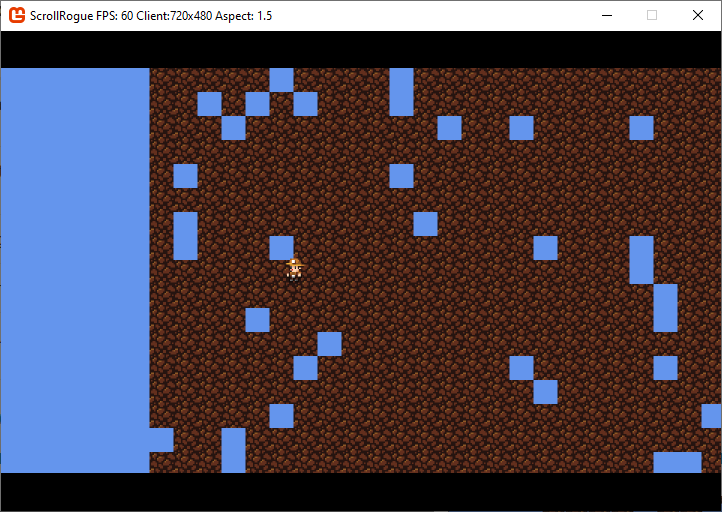

-Render native x4 to 1920x1080 using scale

-Render the upscaled image to the screen by downsampling to the viewport resolution with black bars

Now this does work perfectly for most cases, but if a user doesn't have a 16:9 1920x1080 display it would become blurry. I could upscale to the highest possible integer scale available on their display and downsample that to their screen, but it seems wasteful.
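Picking the integer scale per display could look something like this. It is a rough Python sketch with hypothetical names: `fit` is the largest integer scale that still fits on the screen, and `cover` is the smallest integer scale that is at least as large as the letterboxed viewport (the one that would then be downsampled).

```python
def integer_scales(screen_w, screen_h, native_w=480, native_h=270):
    """Return (fit, cover) integer scale factors for a display
    (hypothetical helper, integer math only)."""
    # Largest integer scale whose image fits entirely on the screen.
    fit = max(1, min(screen_w // native_w, screen_h // native_h))
    # Smallest integer scale >= the letterboxed scale; -(-a // b) is ceil(a / b).
    cover = max(1, min(-(-screen_w // native_w), -(-screen_h // native_h)))
    return fit, cover


def target_size(scale, native_w=480, native_h=270):
    """Size of the intermediate render target at a given integer scale."""
    return native_w * scale, native_h * scale


if __name__ == "__main__":
    for size in [(1920, 1080), (1366, 768), (1280, 1024)]:
        fit, cover = integer_scales(*size)
        print(size, "fit", target_size(fit), "cover", target_size(cover))
```

On a 1366x768 display, for example, this gives a 960x540 target that fits or a 1440x810 target to downsample from.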

The other benefit of upscaling is subpixel-precision camera movement, as the original article's approach suffers from jittery camera movement, at least in my case.
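To illustrate why the upscaled target helps, here is a small Python sketch under the assumption that the camera position is kept as a float in native pixels and snapped to whole pixels of the render target. The function name is made up for illustration.

```python
import math


def snap_camera(camera_pos, scale):
    """Snap a camera coordinate (in native pixels) to the nearest whole
    pixel of a render target that is `scale` times the native size."""
    # floor(x + 0.5) rounds halves up, avoiding Python's round-half-to-even.
    return math.floor(camera_pos * scale + 0.5)


if __name__ == "__main__":
    camera_x = 10.3
    # At native resolution the camera can only move in whole native pixels.
    print(snap_camera(camera_x, 1))
    # At x4 it can move in quarter native pixels.
    print(snap_camera(camera_x, 4) / 4)
```

At x1 the camera lands on native pixel 10, while at x4 it lands on target pixel 41, i.e. 10.25 native pixels, so slow camera movement advances in smaller steps.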

If anyone has ideas for solving this in a more elegant way, please let me know.

Thanks