Hi all,

I’ve been working on a deferred rendering approach for my game (originally an XNA project). I’ve got it all working nicely now, but I wanted to add support for soft particles (for some nice rolling fog etc.).

My old billboarding system worked OK with forward rendering, so I thought I could just modify that shader so it writes its color/depth/normal to the relevant render targets. That seemed wrong, though, since I need to sample the depth map to make my particles soft. That means I need to do it in a forward rendering pass after everything else (I think).

So what I’m doing is:

- Rendering all my models and terrain to a shadow map.

- Rendering all my models and terrain to color, depth, normal render targets.

- Rendering my lights to my light map.

- Combining render targets with my shadow map to give a final image.

Now, from what I’m reading, I think my step 5 (for alpha-blended geometry such as soft particles) would be: reuse the depth render target to compare each pixel of my particles against the depth stored in the map, and fade its alpha accordingly (so smoke etc. fades nicely into the existing geometry).
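To make that concrete, here is how I understand the fade, written as a plain Python sketch rather than shader code. The function name, the `fade_distance` parameter and the assumption that both depths are linear view-space distances in the same units are mine, not something from a particular library.

```python
def soft_particle_alpha(particle_alpha, scene_depth, particle_depth, fade_distance):
    """Fade a particle's alpha as it gets close to the geometry behind it.

    Assumes scene_depth and particle_depth are linear distances from the
    camera in the same units, and fade_distance is greater than zero.
    """
    # How far the scene surface lies behind the particle at this pixel.
    gap = scene_depth - particle_depth
    # Clamp to [0, 1]: 0 where the particle touches or is behind geometry,
    # 1 where it is fade_distance or more in front of it.
    fade = max(0.0, min(1.0, gap / fade_distance))
    return particle_alpha * fade
```

In a pixel shader I’d expect the clamp to become `saturate(...)`, with `scene_depth` coming from the depth render target.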

So that’s the theory, hopefully. I just don’t understand how to get the depth of my particles (since they don’t have a world matrix like a model does) or how to read the correct pixel from my depth map.
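My best guess so far, again sketched in Python rather than HLSL: since the billboard vertices are already in world space, the vertex shader can multiply them by view * projection and pass the resulting clip-space position to the pixel shader, which turns it into a depth map texture coordinate. The function name is made up, and I’m assuming the depth map stores z/w and that texture v runs top to bottom. XNA may also want a half-pixel offset on the coordinate, which I’ve left out.

```python
def clip_to_depth_lookup(clip_pos):
    """Turn a clip-space position (x, y, z, w) into a depth map lookup.

    Returns ((u, v), depth), where (u, v) is the texture coordinate to
    sample in the depth map and depth is the particle's own z/w value.
    """
    x, y, z, w = clip_pos
    # Perspective divide: normalized device coordinates in [-1, 1].
    ndc_x = x / w
    ndc_y = y / w
    # Remap [-1, 1] to [0, 1]; flip Y because texture v grows downward.
    u = ndc_x * 0.5 + 0.5
    v = -ndc_y * 0.5 + 0.5
    # Particle depth in the same form the depth map is assumed to store.
    depth = z / w
    return (u, v), depth
```

If the depth map stores linear depth instead of z/w, the particle depth would have to be computed the same way before comparing the two.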

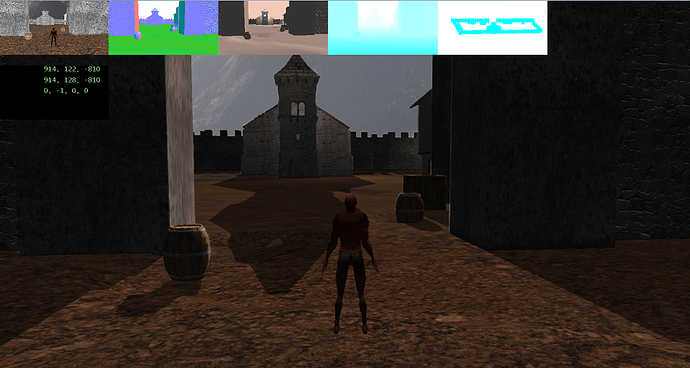

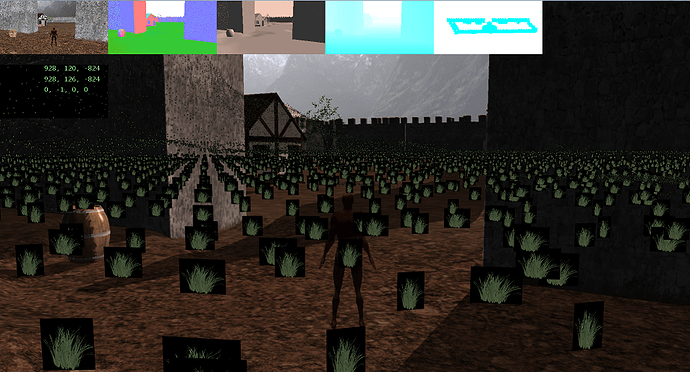

Here’s a screen shot of the problem, I just used grass rather than smoke to make the problem really obvious.

You can see my render targets along the top: Color, Normal, Lightmap, DepthMap, ShadowMap

No Particles:

Particles: