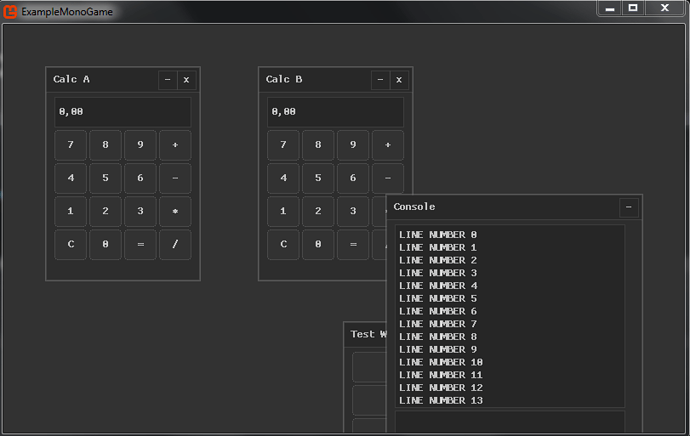

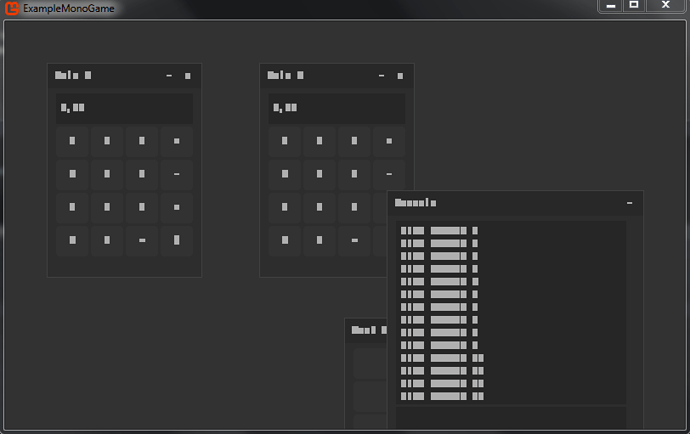

Opaque: yeah, it looks like the background of the image is white with transparency, so opaque is going to be useless.

- What did you get with additive? Is that the top one?

- Can you tell Nuklear to make the font background color transparent black?

- Is the scaling altered at all for the drawing? If it is, make it 1,1.

- Pretty sure the real problem is that it's screwing up the source and destination rectangles.
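To rule out the scaling and rectangle issues from the list above, here is a rough sketch of drawing one glyph 1:1. It assumes MonoGame's `SpriteBatch`; `GlyphDrawSketch`, `DrawGlyphUnscaled`, `atlas`, and `source` are made-up names for illustration, not anything the GUI library provides.

```csharp
using System;
using Microsoft.Xna.Framework;
using Microsoft.Xna.Framework.Graphics;

public static class GlyphDrawSketch
{
    // Hypothetical helper: draws one glyph from a font atlas with no scaling.
    // Must be called between spriteBatch.Begin(...) and spriteBatch.End().
    public static void DrawGlyphUnscaled(
        SpriteBatch spriteBatch, Texture2D atlas, Rectangle source, Vector2 position)
    {
        // The destination uses exactly the source width and height, and the
        // position is snapped to whole pixels, so texels map 1:1 to screen pixels.
        var destination = new Rectangle(
            (int)Math.Round(position.X),
            (int)Math.Round(position.Y),
            source.Width,
            source.Height);

        spriteBatch.Draw(atlas, destination, source, Color.White);
    }
}
```

If the text looks clean when drawn this way, the blur is probably coming from mismatched rectangles or a non-1,1 scale rather than from the blend state.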

Ah wait, is this the begin call that draws the text too…

```csharp
spriteBatch.Begin(samplerState: SamplerState.AnisotropicClamp, depthStencilState: DepthStencilState.None);
```

Anisotropic filtering can have a heavy antialiasing effect on 2D quads.

If it is, get rid of it and replace it with linear (for example `SamplerState.LinearClamp`). If that doesn't give better results, replace it with `SamplerState.PointClamp` and see how it looks.
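A quick way to flip between the two for testing might look like this. It is a sketch assuming MonoGame's built-in `SamplerState.LinearClamp` and `SamplerState.PointClamp`; `SamplerSketch` and `BeginForText` are hypothetical names.

```csharp
using Microsoft.Xna.Framework.Graphics;

public static class SamplerSketch
{
    // Hypothetical helper: begins a batch for text with either point or
    // linear sampling in place of AnisotropicClamp.
    public static void BeginForText(SpriteBatch spriteBatch, bool usePointSampling)
    {
        SamplerState sampler = usePointSampling
            ? SamplerState.PointClamp    // no filtering, crisp texel edges
            : SamplerState.LinearClamp;  // bilinear filtering

        spriteBatch.Begin(samplerState: sampler, depthStencilState: DepthStencilState.None);
    }
}
```

Call it instead of the current `Begin`, draw the text, call `spriteBatch.End()`, and compare the two results side by side.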

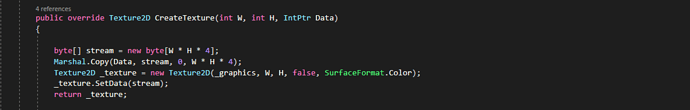

Also, System.Drawing is not cross-platform; it will only work on Windows. Are you doing this because it only gives you the IntPtr for loading the font texture?

```csharp
public override Texture2D CreateTexture(int W, int H, IntPtr Data)
{
    Drawing.Bitmap Bmp = new Drawing.Bitmap(W, H);
    MemoryStream memoryStream = new MemoryStream(W * H);
    Imaging.BitmapData Dta = Bmp.LockBits(new System.Drawing.Rectangle(0, 0, W, H), Imaging.ImageLockMode.WriteOnly, Imaging.PixelFormat.Format32bppArgb);

    for (int i = 0; i < W * H; i++)
        Marshal.WriteInt32(Dta.Scan0, i * sizeof(int), Marshal.ReadInt32(Data, i * sizeof(int)));

    Bmp.UnlockBits(Dta);
    Bmp.Save(memoryStream, Imaging.ImageFormat.Png);
    return Texture2D.FromStream(_graphics, memoryStream);
}
```

If you really can't get around this, then you're going to be limited to Windows anyway. At that point you might as well process the pixels as you load them, using an alpha threshold: set anything over the threshold to fully opaque white, and zero out the RGBA of any pixel below it, right in the loop above.
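Something like the following is what I mean by thresholding. It is only a sketch: `FontAtlasPixels` and `ThresholdAlpha` are made-up names, and it assumes 32-bit pixels with alpha in the top byte of each int (which holds for `Format32bppArgb`, and for RGBA bytes read as a little-endian int).

```csharp
using System;

public static class FontAtlasPixels
{
    // All four channels at 255: fully opaque white in any 8-bit-per-channel layout.
    private const int OpaqueWhite = unchecked((int)0xFFFFFFFF);

    // Hypothetical helper: pixels with alpha above the threshold become opaque
    // white, everything else becomes fully transparent black (all zeros).
    public static void ThresholdAlpha(int[] pixels, byte threshold)
    {
        for (int i = 0; i < pixels.Length; i++)
        {
            // Assumption: alpha lives in the top 8 bits of the packed pixel.
            byte alpha = (byte)(unchecked((uint)pixels[i]) >> 24);
            pixels[i] = alpha > threshold ? OpaqueWhite : 0;
        }
    }
}
```

You could pull the atlas into an `int[]` with `Marshal.Copy(Data, pixels, 0, W * H)`, run this over it, and then write the values into the bitmap instead of copying the raw ones. The threshold value is something you'd have to tune by eye.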

Or you could call GetData on the texture afterwards, process the pixels, and set them back, but blah, that's double work, triple really.

God, I hate to say this, but if you're not bound to Windows and nothing else works, the weapon of last resort is to write your own shader that clips low-alpha pixels and boosts anything above the threshold to fully white pixels. That is the hackiest of hacks for this type of thing, though.

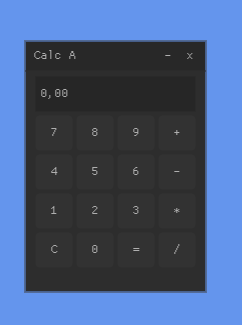

It might just be that Nuklear sucks at text. Alpha blend should work in the first place.

I think this is because its destination rectangle doesn't match the source rectangle's width and height per character. But try getting rid of Anisotropic first. Ecckk, this just might be that library.